To test AI outputs without guesswork, establish clear goals aligned with your objectives, then select specific, measurable metrics such as precision, recall, or accuracy. Use structured tools such as confusion matrices, error analysis, and bias audits to assess performance objectively. Evaluate data quality and real-world relevance, and incorporate user feedback for ongoing improvement. Continuously monitor results, address biases, and refine your approach. The sections below walk through each of these steps.

Key Takeaways

- Use quantitative metrics like precision, recall, and F1 score to objectively evaluate AI outputs.

- Visualize results with confusion matrices and data plots to identify patterns and anomalies.

- Conduct error analysis to pinpoint specific failure modes and areas needing improvement.

- Incorporate user feedback and real-world data to validate performance beyond predefined datasets.

- Regularly benchmark against baseline models and datasets to ensure consistent, unbiased evaluation.

Core Concepts in Evaluating AI Outputs

How do you determine if an AI’s output is truly useful or accurate? The key lies in examining the datasets behind the system and gathering user feedback. Well-designed datasets provide the foundation for reliable AI performance, so check that they are diverse and representative of real-world scenarios, and apply data quality standards so the material is relevant and comprehensive. Consistent evaluation against these datasets helps identify strengths and weaknesses, and analyzing system vulnerabilities can reveal points of failure that affect output reliability. Performance metrics tailored to the specific task make the assessment more precise. User feedback is equally essential, because it reveals how well the AI meets actual needs and expectations. By analyzing this feedback, you can spot patterns and adjust your evaluation criteria accordingly. Combining robust datasets with user feedback lets you assess whether outputs are meaningful, precise, and aligned with your goals. Automated testing can then streamline the process and provide consistent monitoring over time.

How to Set Goals and Metrics for AI Testing

Setting clear goals and metrics is essential to effectively evaluate an AI system’s performance. First, define what success looks like, whether that is improving data privacy, increasing user engagement, or enhancing accuracy. Metrics should be specific, measurable, and aligned with your objectives. For example, if data privacy is a priority, track compliance rates or incident reports. If user engagement matters, measure session durations, click-through rates, or repeat usage. Establish baseline values so you can compare future performance against them, and review and adjust your goals as your AI evolves. Clear metrics help you identify strengths and weaknesses and keep testing focused and actionable. It also helps to understand which role each output plays in the overall system, so you can interpret how a given failure affects it. Finally, plan for unpredictable real-world inputs: robustness testing can reveal vulnerabilities that appear only when the AI encounters unexpected or challenging conditions.
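The idea of baselines and targets can be sketched in a few lines. This is a minimal illustration, not a prescribed framework: the `MetricGoal` class, the `check_goal` helper, the metric names, and all numbers are hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricGoal:
    """One measurable goal: where we started and where we want to be."""
    name: str
    baseline: float
    target: float
    higher_is_better: bool = True


def check_goal(goal: MetricGoal, observed: float) -> str:
    """Return 'met', 'improved' (better than baseline), or 'no_gain'."""
    # Flip the sign for metrics where lower values are better (e.g. incidents).
    sign = 1 if goal.higher_is_better else -1
    if sign * observed >= sign * goal.target:
        return "met"
    if sign * observed > sign * goal.baseline:
        return "improved"
    return "no_gain"


# Hypothetical goals and observations, for illustration only.
goals = [
    MetricGoal("accuracy", baseline=0.82, target=0.90),
    MetricGoal("privacy_incidents", baseline=5, target=2, higher_is_better=False),
    MetricGoal("click_through_rate", baseline=0.040, target=0.050),
]
observed = {"accuracy": 0.86, "privacy_incidents": 2, "click_through_rate": 0.038}

report = {g.name: check_goal(g, observed[g.name]) for g in goals}
for name, status in report.items():
    print(f"{name}: {status}")
```

Recording the baseline next to the target makes it possible to tell "better than before but not yet good enough" apart from "no progress", which a single pass/fail threshold would hide.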

Tools and Techniques to Assess AI Performance

To accurately evaluate an AI system’s performance, you need a range of tools and techniques that provide actionable insights. These help you interpret how well your AI handles natural language tasks and identify areas for improvement. Consider using:

- Performance metrics like precision, recall, and F1 score for quantitative assessment

- Confusion matrices to visualize classification accuracy

- Data visualization tools to spot patterns and anomalies in outputs

- Error analysis to understand where the AI struggles

- Natural language evaluation tools that assess coherence, relevance, and fluency

Beyond these tools, consider the background and cultural context of the people represented in your data when evaluating the AI’s cultural sensitivity and checking for bias. Performance benchmarking lets you compare different models and select the best option for your needs. Used together, these techniques help you interpret results consistently across scenarios, and data visualization in particular makes complex performance data easier to understand and act upon.
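To make the quantitative metrics in the list above concrete, here is a small sketch that builds confusion-matrix counts for a binary classifier and derives precision, recall, and F1 from them. The function names and the label lists are made up for this example; in practice a library such as scikit-learn offers equivalent functions.

```python
from collections import Counter


def confusion_counts(y_true, y_pred, positive=1):
    """Count true/false positives and negatives for one positive class."""
    counts = Counter()
    for truth, pred in zip(y_true, y_pred):
        if pred == positive:
            counts["tp" if truth == positive else "fp"] += 1
        else:
            counts["fn" if truth == positive else "tn"] += 1
    return counts


def precision_recall_f1(counts):
    """Derive precision, recall, and F1; use 0.0 when a denominator is zero."""
    tp, fp, fn = counts["tp"], counts["fp"], counts["fn"]
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1


# Hypothetical ground truth and predictions, for illustration only.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]

counts = confusion_counts(y_true, y_pred)
precision, recall, f1 = precision_recall_f1(counts)
print(dict(counts))
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Precision answers "of the items flagged positive, how many were right?", while recall answers "of the truly positive items, how many were found?". Looking at the raw counts alongside the ratios shows which kind of error dominates.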

How to Spot and Address Biases in AI Results

Biases can subtly influence AI results, often leading to unfair or skewed outcomes without your immediate awareness. To detect these biases, examine your data and outputs critically, looking for patterns that favor certain groups or perspectives. Address biases by focusing on algorithm fairness and implementing bias mitigation strategies. Consider the following factors:

| Bias Source | Mitigation Strategy |
|---|---|
| Data imbalance | Diversify training data |
| Model assumptions | Regular bias audits |
| Labeling inconsistencies | Standardize annotation processes |

Understanding the underlying data distribution can also surface hidden biases that are not immediately apparent. Diverse, representative training data helps a model reflect a broad range of perspectives, which makes equity in data collection part of the fairness work. Data quality matters as well: poor data can amplify existing biases and undermine model fairness.
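One simple form of bias audit is to compute the same metric separately for each group and look at the gap. The sketch below does this for accuracy. The group labels, the records, and any threshold you would apply to the gap are assumptions for illustration; real audits usually examine several metrics and need enough samples per group to be meaningful.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy per group from (group, y_true, y_pred) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, pred in records:
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}


def max_gap(per_group):
    """Largest difference in the metric between any two groups."""
    return max(per_group.values()) - min(per_group.values())


# Hypothetical audit records: (group, true label, predicted label).
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 1),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 0), ("group_b", 0, 0),
]

per_group = accuracy_by_group(records)
gap = max_gap(per_group)
print(per_group, f"gap={gap:.2f}")
```

A large gap does not prove unfairness by itself, but it tells you which slice of the data to inspect first, for example for the data imbalance or labeling inconsistencies listed in the table.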

Interpreting Results and Improving Your AI Models

How can you guarantee that your AI models deliver accurate and reliable results? The key is effectively interpreting results and iteratively improving your models. Start by analyzing performance metrics to identify patterns and weaknesses. Use insights from your training datasets to understand where the model struggles. Incorporate user feedback to pinpoint real-world issues and adjust accordingly. Focus on these strategies:

- Regularly review model outputs against benchmarks

- Fine-tune with diverse, high-quality training data

- Address biases revealed during testing

- Leverage user feedback for targeted improvements

- Continuously monitor performance over time

Keep in mind the factors that contribute to model bias, since awareness of them helps in developing more balanced and fair AI systems. The environment in which a model operates also matters: shifts in inputs or usage can explain variations in performance. Robust evaluation methods help confirm that the model stays reliable across these different scenarios.
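Continuous monitoring, mentioned in the list above, can start as a rolling window over recent outcomes. The following is a rough sketch under stated assumptions: the `RollingAccuracy` class, the window size, and the alert threshold are all hypothetical and would need tuning for a real system.

```python
from collections import deque


class RollingAccuracy:
    """Track accuracy over the most recent `window` labeled outcomes."""

    def __init__(self, window, alert_below):
        self.outcomes = deque(maxlen=window)  # old outcomes drop off automatically
        self.alert_below = alert_below

    def record(self, correct):
        self.outcomes.append(bool(correct))

    @property
    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self):
        """Alert only once the window is full, to avoid noisy early alarms."""
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy < self.alert_below


# Hypothetical stream of outcomes: 1 = output judged correct, 0 = incorrect.
monitor = RollingAccuracy(window=5, alert_below=0.8)
for outcome in [1, 1, 1, 1, 1]:
    monitor.record(outcome)
healthy = monitor.needs_review()

for outcome in [0, 0]:
    monitor.record(outcome)
degraded = monitor.needs_review()
print(healthy, degraded, monitor.accuracy)
```

A window-based check reacts to recent drift instead of averaging it away over the model’s whole history, at the cost of being noisier when the window is small.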

Frequently Asked Questions

How Often Should AI Outputs Be Reevaluated for Accuracy?

Reevaluate AI outputs on a regular schedule. Every few months is a reasonable starting point, with more frequent checks when your data or usage changes quickly. Watch your evaluation metrics for performance dips, and confirm that bias mitigation efforts remain effective. Frequent assessment helps you identify issues early, adapt to new data, and improve the model’s reliability. Consistent reevaluation keeps your AI aligned with your goals and limits errors caused by outdated or biased outputs.

Can AI Evaluation Methods Be Automated Completely?

Not completely, but largely. You can automate many evaluation tasks, including scoring and bias detection, to streamline your process. Tools that analyze outputs in real time reduce manual effort and improve consistency: automated scoring evaluates accuracy quickly, while bias detection checks for fairness. Some aspects still require human oversight, so treat automation as a way to make testing more efficient and reliable, not as a replacement for review.
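One way to combine automated scoring with human oversight is to let the automated score decide the clear cases and route borderline ones to a reviewer. The sketch below assumes a deliberately crude scoring function (word overlap with a reference answer) and made-up thresholds and test cases; a real pipeline would substitute a scorer suited to the task.

```python
def token_overlap(expected, actual):
    """Fraction of the expected answer's unique words found in the actual output."""
    expected_words = set(expected.lower().split())
    actual_words = set(actual.lower().split())
    if not expected_words:
        return 0.0
    return len(expected_words & actual_words) / len(expected_words)


def triage(cases, pass_at=0.8, fail_below=0.4):
    """Sort cases into pass, fail, or human_review based on the overlap score."""
    result = {"pass": [], "fail": [], "human_review": []}
    for case_id, expected, actual in cases:
        score = token_overlap(expected, actual)
        if score >= pass_at:
            result["pass"].append(case_id)
        elif score < fail_below:
            result["fail"].append(case_id)
        else:
            result["human_review"].append(case_id)  # too uncertain to automate
    return result


# Hypothetical test cases: (id, reference answer, model output).
cases = [
    ("q1", "Paris is the capital of France", "paris is the capital of france"),
    ("q2", "water boils at 100 degrees celsius", "water boils at 90 degrees"),
    ("q3", "the answer is forty two", "I do not know"),
]

result = triage(cases)
print(result)
```

The middle band is the important design choice here: it keeps automation from silently passing or failing outputs that the scorer cannot judge well.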

What Are Common Pitfalls in AI Output Testing?

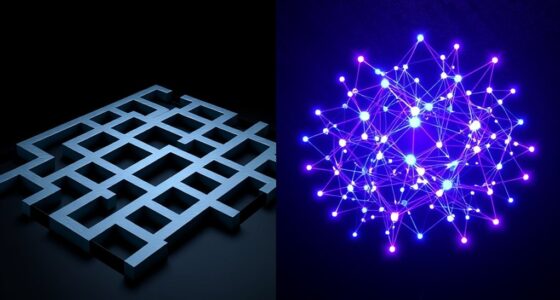

Testing AI outputs is like steering through a maze—you might miss hidden biases or overlook interpretability issues. Common pitfalls include ignoring bias detection, which skews results, or relying solely on surface-level metrics. You need to dig deeper with interpretability metrics to truly understand model behavior. Failing to do so leads to false confidence, potentially deploying unreliable models. Stay vigilant, question assumptions, and use exhaustive evaluation methods to avoid these pitfalls.

How Do I Compare Different AI Models Objectively?

To compare different AI models objectively, start with benchmark datasets that provide a standard reference. Evaluate model bias by analyzing how each model performs across diverse data segments. Measure key metrics such as accuracy, precision, and recall systematically, avoid subjective judgments, and run every model on the same test set under the same conditions. This approach helps you identify the strengths and weaknesses of each model clearly and fairly.
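As a small illustration of comparing models under identical conditions, the sketch below scores two models on the same labeled examples and also counts the cases where only one of them is correct. The `compare_models` helper and the label lists are hypothetical. The disagreement counts are the raw ingredients of paired significance tests such as McNemar’s test, which are not computed here.

```python
def compare_models(y_true, preds_a, preds_b):
    """Compare two models on the same examples; return accuracies and disagreements."""
    n = len(y_true)
    if n == 0 or not (len(preds_a) == len(preds_b) == n):
        raise ValueError("all inputs must be non-empty and the same length")
    correct_a = [a == t for t, a in zip(y_true, preds_a)]
    correct_b = [b == t for t, b in zip(y_true, preds_b)]
    return {
        "accuracy_a": sum(correct_a) / n,
        "accuracy_b": sum(correct_b) / n,
        # Examples where exactly one of the two models is right.
        "only_a_correct": sum(a and not b for a, b in zip(correct_a, correct_b)),
        "only_b_correct": sum(b and not a for a, b in zip(correct_a, correct_b)),
    }


# Hypothetical benchmark labels and predictions from two models.
y_true = [1, 0, 1, 1, 0, 1, 0, 0, 1, 0]
preds_a = [1, 0, 1, 0, 0, 1, 0, 1, 1, 0]
preds_b = [1, 0, 0, 0, 0, 1, 1, 1, 1, 0]

comparison = compare_models(y_true, preds_a, preds_b)
print(comparison)
```

Two models with similar overall accuracy can still fail on different examples, so the disagreement counts often say more about their relative strengths than the headline numbers do.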

What Ethical Considerations Are Involved in AI Testing?

Imagine walking a tightrope, balancing fairness and honesty. As you test AI, you must prioritize bias mitigation to prevent unfair outcomes and uphold transparency standards, ensuring your work remains accountable. Ethical considerations demand that you respect user privacy, avoid harmful biases, and clearly communicate how your models make decisions. By doing so, you build trust and promote responsible AI development, making sure technology serves everyone fairly and ethically.

Conclusion

With these evaluation techniques, you can refine AI outputs based on evidence instead of guesswork. Clear goals, measurable metrics, bias audits, and user feedback give you concrete insights into where your models fall short, and continuous monitoring shows whether your fixes hold up over time. Treat evaluation as an ongoing practice, and your AI will become more reliable and fair with each iteration.