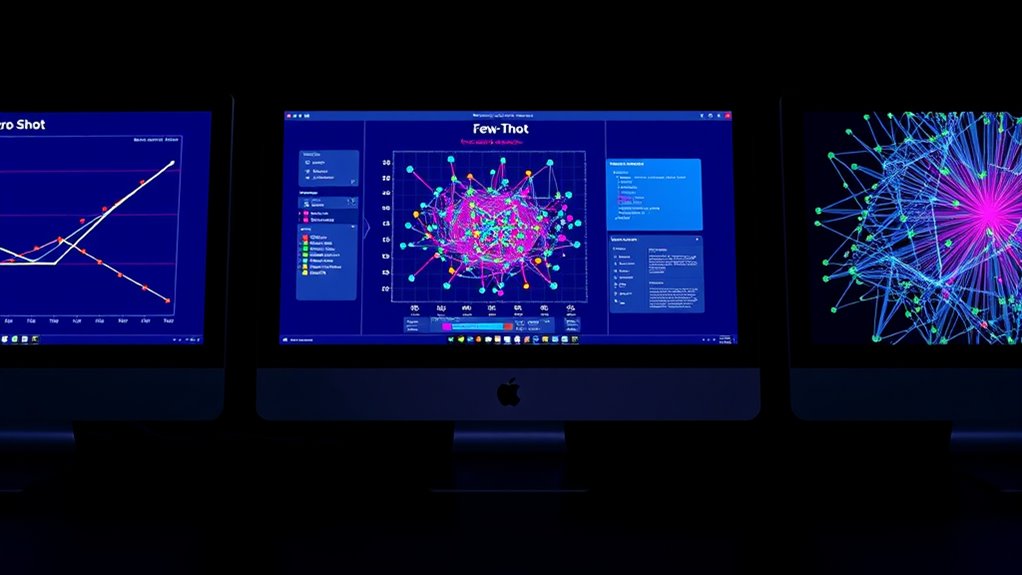

Zero-shot, few-shot, and fine-tuning differ mainly in how much task-specific data they need and how flexible they are. Zero-shot relies on a pre-trained model to handle a task without any task-specific examples, making it quick and resource-efficient. Few-shot supplies a small number of examples to improve accuracy while keeping the approach adaptable. Fine-tuning updates the model on a larger task-specific dataset, which usually boosts performance but demands more data, compute, and time. Exploring these differences helps you choose the best approach for your goals and constraints.

Key Takeaways

- Zero-shot learning requires no task-specific training data, relying on pre-trained knowledge for new tasks.

- Few-shot learning uses a small number of examples to adapt models with little or no additional training; often the examples are simply included in the prompt.

- Fine-tuning continues training a pre-trained model on a task-specific labeled dataset, typically much larger than a few-shot set, to customize it for that task.

- Zero-shot offers quick deployment and broad generalization, but its task-specific behavior is less predictable and harder to control than that of a fine-tuned model.

- Fine-tuning provides higher accuracy and task-specific performance at the cost of increased data, time, and resource requirements.

Defining Zero-Shot, Few-Shot, and Fine-Tuning Approaches

Understanding the differences between zero-shot, few-shot, and fine-tuning approaches is essential when working with machine learning models. Zero-shot learning lets you use a model without any task-specific training, relying on its ability to generalize from what it learned during pre-training. Few-shot learning requires only a few examples to adapt the model to a new task, and because those examples are explicit, it is easy to see what guidance the model was given. Fine-tuning updates the model's weights on a larger dataset specific to your goal, which can improve accuracy but may complicate interpretability and raise ethical considerations such as learned bias. Each approach offers a different balance between flexibility, data requirements, and transparency. Recognizing these distinctions helps you choose the method that best aligns with your ethical standards and your need for clear, interpretable results.
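To make the distinction concrete, here is a minimal Python sketch of how zero-shot and few-shot differ when you prompt a pre-trained language model: the only change is whether labeled examples are placed in the prompt. The function name `build_prompt`, the `Input:`/`Label:` layout, and the sentiment task are hypothetical choices for illustration, not a format any particular model requires. Fine-tuning is not shown because it changes the model's weights rather than its input.

```python
def build_prompt(task: str, query: str, examples=None) -> str:
    """Build a text prompt: zero-shot if no examples, few-shot otherwise."""
    parts = [task]
    # Each (text, label) pair becomes a worked example the model can imitate.
    for text, label in examples or []:
        parts.append(f"Input: {text}\nLabel: {label}")
    # The query comes last, with the label left blank for the model to fill in.
    parts.append(f"Input: {query}\nLabel:")
    return "\n\n".join(parts)


task = "Classify the sentiment of the input as positive or negative."
query = "The battery died after an hour."

zero_shot = build_prompt(task, query)
few_shot = build_prompt(
    task,
    query,
    examples=[
        ("Great screen and fast shipping.", "positive"),
        ("Stopped working after a week.", "negative"),
    ],
)
print(few_shot)
```

Sending the resulting string to a model is left out, since that step depends on which model or API you use.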

The Role of Data Requirements in Each Method

Data requirements for zero-shot, few-shot, and fine-tuning vary considerably and directly affect how practical each method is. Zero-shot approaches need little to no task-specific data, relying on the pre-trained model to generalize, so there is essentially no dataset to build or annotate. Few-shot methods require a small, carefully selected set of examples: annotation cost rises compared with zero-shot, but the dataset stays small and manageable. Fine-tuning demands a much larger labeled dataset to update the model weights effectively, which means significantly higher collection and annotation costs. The choice among these methods depends on your resource constraints and project goals. If data collection and annotation are limited, zero-shot or few-shot is usually more feasible; for tasks demanding high accuracy, investing in a larger dataset for fine-tuning can be justified.
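The trade-off above can be written as a rough decision rule. The sketch below is an assumption-laden illustration: the `Budget` type, `suggest_approach`, and especially the cut-off values are hypothetical and should be replaced with numbers that reflect your own task and validation results.

```python
from dataclasses import dataclass

# Hypothetical cut-offs, for illustration only; calibrate them on your own task.
FEW_SHOT_MIN = 1        # at least one labeled example to show the model
FINE_TUNE_MIN = 1_000   # enough labeled data to justify updating weights


@dataclass
class Budget:
    labeled_examples: int  # task-specific labeled examples available
    can_retrain: bool      # whether you have the compute and time to train


def suggest_approach(budget: Budget) -> str:
    if budget.labeled_examples >= FINE_TUNE_MIN and budget.can_retrain:
        return "fine-tuning"
    if budget.labeled_examples >= FEW_SHOT_MIN:
        # With plenty of data but no way to retrain, a small subset
        # can still serve as few-shot examples.
        return "few-shot"
    return "zero-shot"


print(suggest_approach(Budget(labeled_examples=20, can_retrain=False)))
```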

How Models Generalize Without Task-Specific Training

Models can achieve impressive performance without task-specific training by generalizing from the broad knowledge they acquired during pre-training. Because large models are trained on vast and diverse data, they learn patterns that carry over to many different contexts, so they can make reasonable predictions for a task even when given no task-specific examples. However, this approach raises ethical considerations, such as biases embedded in the pre-training data and the risk of unintended consequences. Even without specialized training, these models still require careful oversight to ensure responsible use, and ongoing research into AI security vulnerabilities helps identify and mitigate the risks of deploying them. Your goal is to balance the power of generalization with ethical awareness, ensuring that the model's broad capabilities serve your needs without causing harm.
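The idea of predicting without task-specific training can be illustrated with a toy zero-shot classifier: each label is described in plain text, and an input is assigned to the label whose description it most resembles. In a real system a pre-trained embedding model would produce the vectors; here a bag-of-words counter stands in for it (an assumption made so the sketch runs with only the standard library), so it only matches exact words. The label descriptions are hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Stand-in for a pre-trained encoder: a simple bag-of-words count vector.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def zero_shot_classify(text: str, label_descriptions: dict[str, str]) -> str:
    # No labeled training examples: each label is described in plain text,
    # and the input gets the label whose description it most resembles.
    query = embed(text)
    return max(
        label_descriptions,
        key=lambda label: cosine(query, embed(label_descriptions[label])),
    )


labels = {
    "sports": "a game match team player score goal",
    "finance": "stock market price shares investors bank",
}
print(zero_shot_classify("the team scored a late goal to win the match", labels))
```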

Adaptability and Flexibility of Different Techniques

You can compare how well each technique transfers skills across tasks and adapts to new contexts. Zero-shot methods excel at applying knowledge quickly without task-specific training, but may struggle with nuanced adjustments. Few-shot and fine-tuning approaches give you more control when tailoring a model to unique requirements. In every case, adaptability determines how well a method handles diverse and unforeseen inputs.

Transferability of Skills

When evaluating the transferability of skills, it is vital to examine how adaptable and flexible each technique is across different tasks. Zero-shot methods apply pre-trained knowledge without additional training, but may offer little insight into how decisions are made. Few-shot techniques adapt quickly with minimal data, offering moderate flexibility, though the examples you supply can raise data-privacy considerations. Fine-tuning allows extensive customization, improving performance on the target task but risking overfitting and weaker performance on tasks outside it. In short:

- Zero-shot approaches require less task-specific data but may lack transparency.

- Few-shot methods balance adaptability with ethical sensitivity, especially around the data used as examples.

- Fine-tuning boosts performance on targeted tasks, yet can compromise interpretability.

- All techniques must consider ethical implications when transferring skills across diverse domains.

Context Adaptation Capabilities

The ability of different techniques to adapt to new contexts varies markedly, shaping their flexibility across diverse tasks. Zero-shot methods excel here, letting models generalize without prior examples, but often at the expense of interpretability. Few-shot approaches strike a balance, offering some adaptability while making the intended behavior explicit through the examples provided. Fine-tuning provides the most customization for a specific context but reduces flexibility elsewhere, and it can introduce ethical concerns if the model is overfitted or biased. When evaluating these techniques, consider interpretability to ensure transparency, especially in sensitive applications. Ethical considerations play a vital role in selecting a method, because more adaptable models may unintentionally reinforce biases or produce undesired outcomes. Ultimately, your choice depends on the task's complexity, transparency needs, and ethical requirements.
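One way to see few-shot adaptation to a new context is a nearest-centroid (prototype-style) classifier: average the feature vectors of the few labeled examples per class, then assign each new input to the nearest average. The sketch assumes the feature vectors come from a frozen pre-trained encoder (not shown) and uses made-up two-dimensional vectors; the function names are hypothetical. Adapting to a new context means swapping in a new support set, with no weight updates.

```python
import math


def centroid(vectors):
    # Element-wise mean of a list of equal-length vectors.
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def fit_prototypes(support):
    # support: {label: [feature vectors]} with only a handful of examples per label.
    return {label: centroid(vecs) for label, vecs in support.items()}


def predict(prototypes, x):
    # Assign x to the label whose prototype is closest in Euclidean distance.
    return min(prototypes, key=lambda label: math.dist(x, prototypes[label]))


support = {
    "cat": [[0.9, 0.1], [0.8, 0.2]],
    "dog": [[0.1, 0.9], [0.2, 0.7]],
}
protos = fit_prototypes(support)
print(predict(protos, [0.7, 0.3]))
```

Adding a class only requires a few examples of it, for instance `fit_prototypes({**support, "bird": [[0.5, 0.5]]})`, which is the kind of cheap adaptation few-shot methods offer.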

Performance Expectations Across Various Tasks

You'll notice that accuracy varies depending on the task and the method you choose. Data efficiency also plays a role, since some techniques need far less data to perform well. As tasks become more complex, expectations shift, and that affects which approach works best.

Accuracy Variations Across Tasks

Accuracy varies across tasks because different learning approaches perform unevenly depending on the nature and complexity of each task. Narrow classification tasks with enough labeled data often benefit from fine-tuning, while zero-shot methods handle general language-understanding tasks well but may miss domain-specific nuance. Few-shot learning strikes a balance, offering decent accuracy with limited data. Keep in mind:

- Tasks that demand consistent, auditable behavior may favor fine-tuning.

- Ethical considerations influence the choice, especially when models handle sensitive data.

- Complex, domain-specific tasks often require more tailored approaches for better accuracy.

- Simpler tasks might succeed with zero-shot or few-shot, saving resources.

Understanding these accuracy variations helps you select the right approach, aligning model performance with task requirements and ethical standards.
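Because accuracy differs by task as well as by method, it helps to score every method on the same held-out examples for each task rather than quoting a single number. The sketch below shows only the bookkeeping; the `accuracy_by_task` name, the record format, and the predictions are hypothetical placeholders, not measured results.

```python
from collections import defaultdict


def accuracy_by_task(records):
    """records: iterable of (method, task, predicted, gold) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for method, task, pred, gold in records:
        key = (method, task)
        total[key] += 1
        correct[key] += int(pred == gold)
    # Accuracy per (method, task) pair.
    return {key: correct[key] / total[key] for key in total}


# Hypothetical predictions, made up only to show the bookkeeping.
records = [
    ("zero-shot", "sentiment", "pos", "pos"),
    ("zero-shot", "sentiment", "neg", "pos"),
    ("fine-tuned", "sentiment", "pos", "pos"),
    ("fine-tuned", "sentiment", "pos", "pos"),
]
for (method, task), acc in sorted(accuracy_by_task(records).items()):
    print(f"{method:>10} {task:<10} {acc:.2f}")
```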

Data Efficiency Levels

Different learning approaches suit different levels of data availability, which determines how much data you need for effective performance. Zero-shot methods require minimal task-specific data because they rely on pre-trained knowledge, which makes them efficient but less transparent. Few-shot learning improves accuracy with a handful of examples, balancing data use and clarity, but still raises ethical considerations around bias and fairness. Fine-tuning demands substantial data and usually delivers the highest task performance, yet it increases the risk of overfitting and of learning biases present in that data. Your choice depends on task complexity, available data, and the importance of interpretability and ethics. Recognizing these data efficiency levels helps you set realistic expectations and guides responsible AI deployment across different applications.

Task Complexity Impact

The effectiveness of zero-shot, few-shot, and fine-tuning approaches varies considerably with the complexity of the task. For simple tasks, zero-shot may suffice, but as complexity grows, few-shot or fine-tuning often delivers better results. Complex tasks also demand more interpretability, so that you can understand the model's decision processes and confirm that ethical requirements are met.

- Zero-shot models can struggle with nuanced or specialized tasks, risking misinterpretations.

- Few-shot learning offers a balance but still may lack detailed understanding for intricate problems.

- Fine-tuning improves performance on complex tasks but requires more data and computational resources.

- Increasing task complexity highlights the importance of model interpretability and ethical considerations to ensure responsible AI deployment.

Computational Resources and Time Investment

When choosing between zero-shot, few-shot, and fine-tuning, the computational resources and time required vary considerably. Zero-shot methods demand minimal resources because they use a pre-trained model without additional training, making them quick but often less interpretable. Few-shot learning needs moderate resources: when the examples are supplied in the prompt there is no training step, but every query carries extra input tokens, and when the model is briefly trained on the examples, the run is short because the dataset is tiny. Fine-tuning consumes significant computational power and time because it retrains the model on your specific data, and it raises ethical considerations around data bias and model transparency. Your choice depends on balancing available resources against interpretability and ethical implications, with fine-tuning offering more control at a higher resource cost.
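The resource gap can be estimated with widely used rules of thumb for dense transformer models: a forward pass costs roughly 2 FLOPs per parameter per token, and training costs roughly 6 (forward plus backward). These are approximations, and the model size, prompt lengths, and dataset size below are hypothetical; the sketch also assumes full fine-tuning rather than a parameter-efficient variant.

```python
def inference_flops(params: float, tokens: float) -> float:
    # Rule of thumb for dense transformers: ~2 FLOPs per parameter per token
    # for a forward pass.
    return 2 * params * tokens


def finetune_flops(params: float, train_tokens: float, epochs: int = 1) -> float:
    # ~6 FLOPs per parameter per token: forward (~2) plus backward (~4).
    return 6 * params * train_tokens * epochs


N = 7e9  # hypothetical 7B-parameter model

zero = inference_flops(N, 200)          # short instruction plus query
few = inference_flops(N, 1_200)         # same query plus ~1,000 tokens of examples
ft = finetune_flops(N, 10e6, epochs=3)  # hypothetical 10M-token dataset, 3 epochs

print(f"zero-shot per query:   {zero:.1e} FLOPs")
print(f"few-shot per query:    {few:.1e} FLOPs")
print(f"fine-tuning (one-off): {ft:.1e} FLOPs")
```

Under these hypothetical numbers, each few-shot query costs six times as much as a zero-shot query because of the longer prompt, while the fine-tuning run is a one-off cost equal to about 75,000 few-shot queries, paid before the fine-tuned model answers anything.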

Use Cases and Practical Applications

Have you ever wondered which approach best suits your real-world needs? Zero-shot, few-shot, and fine-tuning each excel in different practical scenarios. Zero-shot models are ideal for rapid domain adaptation when you lack labeled data, enabling quick deployment across varied fields. Few-shot approaches work well when you have limited data but need better accuracy, especially useful in niche industries. Fine-tuning is perfect for situations demanding high model interpretability or specialized performance, like medical diagnosis. Consider these applications:

- Rapid deployment in new domains without retraining

- Customizing models with minimal labeled data

- Enhancing model transparency for sensitive tasks

- Improving accuracy in specialized fields with full training

Choosing the right method depends on your specific needs for domain adaptation and interpretability, ensuring practical effectiveness.

Advantages and Disadvantages of Each Strategy

Understanding the advantages and disadvantages of zero-shot, few-shot, and fine-tuning strategies helps you choose the right approach for your needs. Zero-shot models excel in flexibility and require minimal data but often lack interpretability, making it harder to understand their decision-making. Few-shot learning strikes a balance, needing some data to improve accuracy, but it can still pose interpretability challenges and may raise ethical concerns if the small set of examples isn't representative. Fine-tuning offers high accuracy and customization but demands significant resources and risks overfitting, which hurts performance on data unlike the training set. It also raises ethical concerns if the model learns biases from the training data. Weigh these pros and cons carefully to align your choice with your project's interpretability requirements and ethical standards.

Frequently Asked Questions

How Do Zero-Shot, Few-Shot, and Fine-Tuning Compare in Real-World Scenarios?

In real-world scenarios, you’ll find that zero-shot models excel in adaptability with no extra data needed, making them quick to deploy. Few-shot models strike a balance, offering better performance with minimal data, boosting data efficiency. Fine-tuning requires more data but enhances model accuracy and customization. Your choice depends on your needs: for rapid adaptability, go zero-shot; for efficiency, consider few-shot; and for precision, fine-tuning is best.

What Are the Risks of Overfitting in Each Approach?

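Overfitting comes from fitting a model too closely to limited training data, so the risk tracks how much task-specific training each approach involves. Zero-shot involves no task-specific training, so it cannot overfit your task data in the usual sense, although it may still perform poorly on inputs unlike its pre-training data. Few-shot learning that updates weights on a handful of examples can easily overfit, memorizing them rather than learning the task; when the examples are only placed in the prompt, the analogous risk is over-reliance on the particular examples chosen and their order. Fine-tuning can overfit when the model is large relative to the dataset or is trained too long, becoming overly tailored and losing flexibility. Holding out validation data and balancing data size against model complexity helps you avoid these pitfalls.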
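For approaches that update weights (fine-tuning, or few-shot training on a handful of examples), a standard guard against overfitting is early stopping: track loss on held-out validation data and keep the checkpoint from the epoch where it was lowest. The function below is a minimal sketch of that rule; the name `early_stopping_epoch`, the `patience` and `min_delta` defaults, and the loss values are hypothetical.

```python
def early_stopping_epoch(val_losses, patience=2, min_delta=0.0):
    """Return the index of the epoch whose checkpoint to keep.

    Stops scanning once validation loss has failed to improve by more than
    `min_delta` for `patience` consecutive epochs.
    """
    best_epoch, best_loss, bad_epochs = 0, float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_epoch, best_loss, bad_epochs = epoch, loss, 0
        else:
            bad_epochs += 1
            if bad_epochs >= patience:
                break
    return best_epoch


# Hypothetical validation losses: they bottom out at epoch 2 and then rise,
# a typical sign that the model has started to overfit its training data.
val = [0.90, 0.72, 0.65, 0.68, 0.74, 0.81]
print(early_stopping_epoch(val))
```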

How Does Model Interpretability Vary Across These Techniques?

When considering model interpretability, you'll find that zero-shot models often offer low transparency because they rely entirely on pre-trained knowledge, which makes their decisions hard to explain. Few-shot models improve slightly, since the examples show what guidance the model received, but they still face interpretability challenges. Fine-tuned models can be easier to audit because their behavior is shaped by data you control, though the underlying network remains complex and hard to interpret. Overall, your choice affects how easily you can interpret and trust the model's decisions.

Can These Methods Be Combined for Improved Performance?

Did you know hybrid approaches and ensemble strategies can boost model performance? Combining zero-shot, few-shot, and fine-tuning methods lets you leverage their strengths. For example, you can use zero-shot for initial insights, then fine-tune for specific tasks, and ensemble the outputs for better accuracy. This flexible strategy helps you adapt models to complex problems, though running several models adds compute cost and can make the combined system harder to interpret.
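The simplest way to ensemble outputs from several approaches is a majority vote over their predicted labels. This sketch assumes each model has already produced a label for the same input; the `majority_vote` name, the model names, and the tie-breaking order in `priority` are hypothetical choices.

```python
from collections import Counter


def majority_vote(predictions: dict[str, str], priority: list[str]) -> str:
    """Combine labels from several models by majority vote.

    `priority` lists every model from most to least trusted and is used
    only to break ties between equally popular labels.
    """
    counts = Counter(predictions.values())
    top = max(counts.values())
    # Among the most-voted labels, prefer the one from the most trusted model.
    for model in priority:
        if counts[predictions[model]] == top:
            return predictions[model]
    raise ValueError("priority must list every model in predictions")


preds = {"zero-shot": "neg", "few-shot": "pos", "fine-tuned": "pos"}
print(majority_vote(preds, ["fine-tuned", "few-shot", "zero-shot"]))
```

Weighted votes, or averaging predicted probabilities when the models expose them, are common refinements when the models differ in reliability.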

What Are the Ethical Considerations Associated With Each Approach?

When considering the ethical aspects, you should focus on bias mitigation, as each approach can inadvertently reinforce stereotypes or unfair biases. Privacy concerns are also critical, especially when fine-tuning on sensitive data, since a model can memorize and later leak parts of its training data. Zero-shot and few-shot methods may reduce privacy risks because no sensitive data is used to update the weights, but prompts and examples still require careful handling, and bias remains a concern. Overall, you must balance performance with ethical responsibility to ensure fair, respectful AI use.

Conclusion

So, whether you prefer zero-shot, few-shot, or fine-tuning, just remember they all come with their quirks. Zero-shot is the magic trick: impressive, but often unpredictable. Few-shot gives you a tiny safety net, while fine-tuning demands your soul (and your resources). Choose wisely, or you'll end up like the guy who thought “training” was just hitting “update.” In the end, it's all about balancing your needs; no crystal balls included.